Turn around these days and you’re sure to bump into a colleague engaged in some type of assessment project. Workshops, books, and consultants abound to teach us how to formulate and measure learning objectives. If your provost wants benchmarks to justify IT staffing levels, a quick search of Google, Amazon, or EDUCAUSE resources will yield a number of how-to resources to get you started on benchmarking. But what if your unit is asked to develop a strategic plan for campus learning technologies? Sure, you’ll find plenty of books about strategic planning, but how will you know what your faculty, students, and campus really want and need? Should you see what usage data your campus already collects and try to project future needs from that? Should you survey faculty? All faculty? A sample? What about students? Couldn’t you just reuse that survey you ran five years ago?

Assessing campus needs for educational technologies and support is no small undertaking, so ensuring that you collect information that truly will help decision making requires careful planning. Data collection can be time-consuming and expensive. A well-organized educational technology needs assessment should add value to campus IT resources, not simply eat into the budget.

Every few years, the University of Colorado at Boulder (CU–Boulder) undertakes a fairly extensive data-collection effort concerning educational technology, usually about one year in advance of a broader IT strategic planning effort. The last comprehensive needs assessment was conducted in 2005 in preparation for the 2006 strategic plan; another, more limited in scope, is under way in 2008. This article is the culmination of our effort to describe and distill CU–Boulder’s experiences to make them adaptable to other campuses.

CU–Boulder’s 2006 strategic plan included a strong focus on undergraduate education and the effective use of technology in support of the campus’s academic mission. The university enjoys a robust technology environment—a ubiquitous wired and wireless network, technology-enhanced classrooms, and a détente between central and decentralized IT support—but like most campuses, CU–Boulder also has resource constraints, so collecting data to inform our resource allocation decisions is a necessity, not a luxury. The IT environment on campus presents additional planning challenges because support for educational technology is provided by a mix of centralized campus-wide units (central IT, faculty and graduate teaching programs, and libraries) and decentralized support in some academic departments. In 2005, CU–Boulder also had several faculty and IT governance bodies, some of which have subsequently changed.

As we thought about key aspects of the needs assessment process at CU–Boulder, we realized that decisions about what kinds of data to collect and how to collect them were shaped by three types of criteria: research criteria, planning criteria, and shared governance criteria. To ensure that we obtained high-quality data, it was important that we considered criteria for good research. To ensure that we obtained not just good data but usable data, it was important that our data directly addressed questions we needed to answer for planning. To ensure long-term legitimacy and buy-in for decisions that might be implemented as a result of the planning process, it was essential that participation in the data collection process reflected a spirit of shared governance.

Research Criteria

Data quality is important for both practical and political reasons; in academic environments, dissenters often attack the research behind a decision when they lack a more fully formed argument against it. Concern for research quality leads to planning decisions about how to obtain valid, reliable, and trustworthy data. For us that meant

- asking the right questions of the right people and data sources,

- using appropriate sampling and systematic data-collection processes, and

- making sure that those processes were transparent and carried out by staff with research expertise and neutrality.

These requirements led us to work with two units in particular: our institutional research (IR) unit and our information technology services (ITS) unit. IR brought to the table several things that we in the CIO office couldn’t provide on our own, most notably the expertise and tools to collect good data, including:

- Trained research staff

- The ability to sample campus subpopulations

- An online survey application that allowed branching and skips

- An understanding of the campus’s survey cycle, which helped avoid survey fatigue and therefore increased our survey response rate

- The ability to brand data collection as “campus-wide” rather than as a specifically IT project, thus projecting greater neutrality

Working with ITS allowed us to mine existing data sets, which meant we didn’t have to ask faculty and students for data already available. It also allowed us to verify the representativeness of some survey responses because the mined data showed how many people ought to be able to answer certain questions, such as those about WebCT use. Leaving out questions that existing data already answered shortened the survey, and a shorter survey means a better overall response rate and fewer instances of missing data on individual questions, thus promoting reliability.

Planning Criteria

Obtaining valid, reliable, and trustworthy data is only the start. Just because data are generally valid and reliable doesn’t mean they’re useful for making specific decisions. As we planned our data collection, we realized it was important to distinguish among data that might serve different purposes.

- Planning versus Reporting: The easiest data to present to a planning committee are data already collected for other reporting purposes. However, such data seldom rise above descriptive bean-counting that answers only the simplest questions regarding who is using what and how often. Strategic decisions usually require answers to more complex questions: How? Why? Why not? What if?

- Research/Curiosity versus Decision Critical: Complex questions may lead simultaneously to answers for planning and for scholarship about educational technology. When data collection serves this dual purpose, it is gratifying to report findings to a wider community, but the primary goal is to inform campus decision makers. Likewise, while we might indulge in a few curiosity questions, it’s important not to burden campus constituencies with questions that don’t directly relate to decisions we might actually make about technology that supports teaching and learning.

- Planning versus Fishing: Whenever possible, it makes sense to align any type of needs assessment with a corresponding strategic plan from the start. We don’t want to end up culling data, hoping to find information to guide our planning decisions.

Admittedly, our initial plan for data collection wasn’t as strategic as it could have been until our faculty advisory committee for IT suggested an approach called “backward market research.” Alan Andreasen introduced the concept of backward market research in the Harvard Business Review in 1985 to combat the problem of market research that simply told executives what they already knew about their customers without leading to actionable decisions. Backward market research starts by identifying the most likely and pressing planning decisions, and then determining the most promising focus of data collection to inform those decisions.1

Backward market research increases the likelihood that data can be used to make resource allocation decisions. It also reduces the number of “curiosity” and “fishing” questions. Business metaphors can be off-putting to some in academe, but we quickly realized that market research and needs assessment have purposes similar enough that we could borrow Andreasen’s backward market research concept without compromising our learning-centered goals.

The backward market research approach forced us to think hard about (1) what decisions we wanted to be able to make because they aligned with our strategic plan, and (2) what decisions we could make because they realistically could be implemented within our resource constraints. These parameters may sound too obvious to make much difference, but when intentionally followed, they add clarity and discipline to an inherently messy process.

Taking a backward market research approach doesn’t mean we can’t collect data to sate curiosity or contribute to scholarship about educational technology. It simply means that we remain aware of the potential costs and benefits of asking these additional questions of our faculty and students.

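Shifting the way we thought about and phrased our questions changed our process dramatically and helped us achieve a more effective and efficient needs assessment. With this perspective, we were able to articulate the overarching decision CU–Boulder wanted to be able to make:

How do we invest our limited resources in educational technology to have the greatest impact on the teaching and learning of the greatest number of faculty members and students?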

From this overarching decision question we still needed to formulate actual data-collection strategies and specific questions to ask our faculty and students, but by defining our primary question of concern, we could target our data collection more effectively. On the surface, our primary question may seem too broad to help focus our data collection, but let’s contrast CU–Boulder’s decision question with questions that would have led us down very different roads of data collection:

Tacit Principles

As we worked to articulate our central question, we also identified three tacit principles that derive from our understanding of CU–Boulder’s strategic plan. First, the university’s primary goal for educational technology is supporting teaching, not disseminating technology. Second, centrally supported educational technologies should target the “majority†of the faculty. In the language of Everett Rogers, who wrote Diffusion of Innovations,2 we were concerned about faculty members who inhabit the forward-midsection of the bell curve, from the early adopters through a bit of the late majority. At CU–Boulder, we routinely gather information about the needs of innovators, as they are an increasingly important group. However, they weren’t the focus of this particular needs assessment, nor did we go out of our way to collect data about the group of users known generally (although inappropriately) as laggards.3

The third tacit principle is that current options may not be the best or right options. This principle reminds us that CU–Boulder’s goal is to facilitate the use of educational technologies that faculty want to use, not to promote our current suite of options. Therefore, our assessment needed to provide opportunities for faculty to identify technologies they wished to use or were already using without university support. As a result, survey questions were organized first around functions that faculty and students might perform with technology and only secondarily around specific products. Faculty and student surveys also provided opportunities for open-ended responses, and we scheduled focus groups with different types of users to allow for more free-flowing conversation about uses and needs. One of our mottos became “We don’t know what we don’t know!”

From our overarching decision question and three tacit principles, several strategic questions emerged as most important:

- How can technology improve teaching and learning?

- What would you like to use but currently don’t?

- What would enable that use?

Questions on Technology Use to Support Teaching

Once we organized our thinking about the IT assessment process around strategic decisions and questions, it became clear that the data needed for good decision making was less about numbers of people using current solutions—and also less about their satisfaction with current services—and more about teaching at CU–Boulder and how technology supports that teaching (or doesn’t!). That said, we still needed to frame some questions in terms of applications or services for them to make sense to campus users. We left some common classroom technologies, such as overhead projectors, out of the data collection because the planning team knew CU–Boulder would continue to make these technologies available. This data-collection decision fit our model of backward market research and strategic focusing, but it also led to unintended consternation from some faculty members who misinterpreted the absence of certain technologies from the survey as a sign they might be eliminated. Table 1 shows the type of technology use questions asked and who was surveyed.

Table 1. Technology Use Questions

| Type of Technology | Faculty | Students |
| --- | --- | --- |
| Learning management systems and online course components | X | X |
| Classroom technologies | X | X |
| Communication technologies | X | X |
| Administrative applications (such as registration) | | X |
| Other | X | X |

Although the bulk of our assessment questions were intended to inform resource allocation decisions, CU–Boulder nonetheless asked some “curiosity” or “reporting” types of questions. Like most institutions, we are expected to provide credible and current data to constituents on and off campus who seek information on the scope and scale of our IT resources, services, and uses. The questions included:

- Who’s using what?

- How is it working?

- How could current use—and support of that use—be improved?

One particularly difficult aspect of backward market research has been determining ahead of time how we should weigh the importance of various qualitative aspects of the data against the sheer quantitative strength of responses. Even as we collected our data, we struggled with how much influence to give several factors:

- The number of responses directing us toward a decision

- The passion of responses by a few respondents

- Differences between schools and colleges

- Political position and will of respondents

Ideally, the team would have decided before collecting the data how much weight to assign each of these potential influences. Instead, we simply hoped that articulating them in advance would help us avoid being unknowingly biased by them.

Shared Governance Criteria

Now we come to the third and final set of criteria in our model, those that ensure that we conduct our assessment in the spirit of shared governance. A key to the success of our assessment effort was understanding that a project of this size could not be implemented effectively in isolation, nor could the decisions reached have a broad impact if only a few people were involved.

We chose the term shared governance for an important reason: In the past several years, the postsecondary IT community—particularly in EDUCAUSE but also in the Society for College and University Planning (SCUP)—has expressed concern about faculty members’ scant input in educational technology decisions. Many of us think of educational technology planning as a subset of institutional planning, but it is also a subset of curriculum planning, and curriculum is the purview of faculty. If we wish to achieve greater faculty participation in educational technology decisions, we need to emphasize the existing (or overdue!) rights and responsibilities of the faculty to share governance of educational technology as a prominent feature of the curriculum.

CU–Boulder is currently reviewing its IT governance structures. Regardless of a particular campus’s governance structures, or lack of them, we think it is beneficial to conceptualize the educational technology needs assessment and planning process through the lens of shared governance. In the spirit of shared governance, we asked ourselves:

- Who are the key stakeholders?

- Who has needed knowledge or expertise?

- Who needs to participate for political reasons?

CU–Boulder has long involved multiple campus units in campus IT needs assessment projects, and this project was no exception. Our academic technology environment is a mix of centralized and decentralized services and support that includes:

- CIO’s office and CTO’s office, which has designated a separate Academic Technologies unit

- Information Technology Services (central services, support, and operations group)

- Professional development units for faculty and graduate students

- Libraries

- Individual departments

Not all these groups were directly consulted in the research design phase of the project, but all were represented on committees that were. To reach these constituencies, the CIO’s office partnered with IR, ITS, and campus governance bodies.

The practical benefits of working with the IR office cannot be overstated, although the political benefits were also significant. IR’s image of neutrality in campus technology issues—particularly compared to the CIO’s office—was important for reassuring campus constituents that technology decision making was a genuinely open and participatory process. We are fortunate to have a large and active IR department on campus, and over the past several years we’ve established a good working relationship with them that proved invaluable in this project.

We are also fortunate to have a large central ITS unit as a strategic partner in the research project for two key reasons:

- ITS has empirical data about technology use collected through routine monitoring systems and therefore untainted by the response bias in surveys that ask faculty to self-report use.

- ITS can and should influence needs assessment projects because they will incur the greatest impact from any decisions made about central provisioning of educational technology services or support.

At the time of our needs assessment, the CU–Boulder campus had three primary governance bodies that advised the administration about IT: the IT Council, concerned with strategic direction for campus IT including budget priorities; the Faculty Advisory Committee for IT; and an IT infrastructure advisory group. The IT Council and Faculty Advisory Committee were included in discussions about the assessment project because they could provide:

- Political vetting and information dissemination

- Knowledge of CU–Boulder’s educational technology assessment and planning audiences

- A nudge toward strategic thinking

Other faculty governance groups, including the Boulder Faculty Assembly and the Arts and Sciences Council, provided input into the overall strategic planning process, including the educational technology needs assessment.

Assessment Framework

The inclusion of shared governance considerations completes the assessment framework for educational technology planning. To plan the data-collection process, the framework incorporates:

- Good research methods to ensure quality data

- Targeted data collection based on backward market research to ensure data that are useful in planning

- Broad participation to promote a campus culture of shared governance of educational technology resources and services

Data Collection

CU–Boulder used several methods to collect the information needed to make strategic decisions about educational technology. Surveys, focus groups, and data mining provided differing perspectives and differing levels of breadth and depth of information.

Surveys

The campus embarked on an in-depth faculty survey that met two needs: to collect strategic data, and to include faculty voices in the research project. Initially, we administered two surveys, one to graduate and undergraduate students and one to faculty. Teaching assistants were asked both student and faculty questions.

The surveys were administered to samples rather than to the entire population. The goal was to increase the validity of the findings by seeking proportional representation from relevant campus subgroups. In both cases, the IR unit provided expertise and a powerful surveying application.

Subsequently, we administered a third survey to all faculty that largely repeated the original faculty survey but with questions added by leaders of the planning committees. The all-faculty survey, although potentially less representative than the carefully drawn sample, ensured that every faculty member had an opportunity to provide input. The decision to administer this second faculty survey reflected not only our commitment to shared governance but also—in roughly equal measure—our reading of political needs.

To survey students, we looked at the population of degree-seeking undergraduate and graduate students who had not requested a privacy designation on their records. The IR staff drew a random sample of 3,000 students, stratified by student level (undergraduate or graduate). The sample included 2,000 undergraduate and 1,000 graduate students. Prior to drawing the sample, the population was sorted on several relevant variables (college, class level, gender, and ethnicity) so that the proportion of students in each category selected for the sample nearly matched the actual population proportion. Then systematic random sampling was used to select students within strata.4
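The sampling steps described above (stratify, sort within each stratum, then select at a fixed interval from a random start) can be sketched in a few lines of Python. This is only an illustration under stated assumptions, not the IR unit's actual procedure: the function name, the record layout, and the field names `level` and `college` are hypothetical, and the fractional-interval method shown is just one common way to do systematic sampling.

```python
import random
from collections import defaultdict


def stratified_systematic_sample(population, stratum_key, sort_keys, sizes, seed=None):
    """Draw a systematic random sample within each stratum.

    population  -- list of dicts, one per person (hypothetical record layout)
    stratum_key -- field that defines the strata, e.g. "level"
    sort_keys   -- fields to sort on before sampling, e.g. ["college"]
    sizes       -- dict mapping each stratum value to its sample size
    """
    rng = random.Random(seed)

    # Split the population into strata.
    strata = defaultdict(list)
    for person in population:
        strata[person[stratum_key]].append(person)

    sample = []
    for stratum, members in strata.items():
        n = sizes.get(stratum, 0)
        if n == 0:
            continue
        if n > len(members):
            raise ValueError(f"stratum {stratum!r} has fewer than {n} members")

        # Sorting first means a fixed-interval selection picks up each
        # category roughly in proportion to its share of the stratum.
        members.sort(key=lambda p: tuple(p[k] for k in sort_keys))

        step = len(members) / n        # sampling interval (may be fractional)
        start = rng.random() * step    # random start within the first interval
        for i in range(n):
            index = min(int(start + i * step), len(members) - 1)
            sample.append(members[index])
    return sample
```

With a population list in this shape, a call such as `stratified_systematic_sample(students, "level", ["college", "class_level"], {"undergraduate": 2000, "graduate": 1000})` would mirror the sample sizes reported here; the sort fields in that call are again placeholders.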

The needs assessment team was more concerned about being able to make decisions based on similarities and differences among schools and colleges than about differentiating instructional technology efforts by faculty rank. For this reason, we stratified the faculty sample only by school and college and by general disciplinary area within Arts and Sciences, and not by faculty track or rank.

Despite incentives for participation (drawings for iPods), the response rate was 33 percent for faculty and 24 percent for students. Low response rates and the high probability of response bias inherent in an online survey about technology use (those who are more comfortable with technology are more likely to respond to the survey) are common limitations to be considered when analyzing campus technology needs.

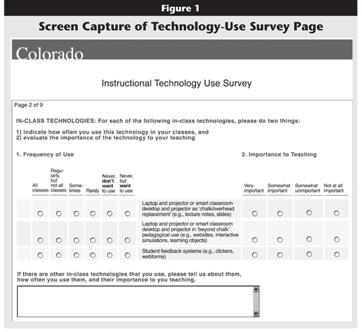

Figure 1 shows a screen capture of one survey page. It illustrates our effort to reduce the survey’s length by using single items to ask participants about their levels of usage and then to rate the item’s importance to teaching. This technique may increase the complexity of the questions somewhat and therefore cast some doubt on responses, but given the characteristics of our respondents, we judged survey length to be the more significant problem.


As at most institutions, computer ownership among students at CU–Boulder is almost universal.5 Therefore, determining student levels of computer ownership was not as critical to our ability to make strategic decisions as was understanding how students use technology (How often do you bring your laptop to class? How often do you use a laptop in class for more than taking notes?). We also wanted to determine if current or intended uses of technologies meet student expectations or preferences, since a gap between student expectations and experiences—if publicized and acknowledged—can be a driver for change in faculty behavior and campus IT priorities.

When asking faculty about their technology use, we asked them to differentiate between “chalk replacement” and “beyond chalk” pedagogical uses. We defined “chalk replacement” as projecting or posting lecture notes or slides, and “beyond chalk” uses as projecting or posting simulations, interactive learning objects, or websites. The survey asked them to indicate both frequency of use of the technology and its importance to their teaching.

Nearly three-quarters of faculty respondents reported using laptops and projectors in the classroom as replacements for chalk and overheads, and nearly half of all faculty use them as chalk replacement regularly or all the time. Not surprisingly, nearly three-quarters of faculty respondents also rated chalk replacement as a somewhat or very important use of laptops and projectors. Just over half of faculty respondents reported using laptops and projectors in class for “beyond chalk” pedagogical uses. While only 33 percent of faculty respondents reported using “beyond chalk” technologies regularly or all the time, a full 72 percent of faculty considered this type of use somewhat or very important. Similarly, only 15 percent of faculty used student feedback systems in class regularly or all the time, yet 45 percent considered them somewhat or very important. Perhaps most significant to our planning efforts, 21 percent of faculty wanted to use laptops and projectors in a “beyond chalk” manner but currently did not; and a notable 39 percent wanted to use student response systems but did not.
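One way to read these figures for planning is to compare how many faculty rate a use as important with how many actually use it regularly. The short Python sketch below does that arithmetic with the percentages quoted above. The dictionary layout and function names are hypothetical, and treating “student feedback systems” and “student response systems” as the same technology is an assumption. The percentages may also rest on slightly different respondent counts, so the gaps are rough indicators rather than precise measures.

```python
# Percentages quoted in the paragraph above. Treating "student feedback
# systems" and "student response systems" as one technology is an assumption.
survey = {
    "Laptop and projector (beyond chalk)": {
        "regular_use": 33, "important": 72, "want_to_use": 21},
    "Student response systems": {
        "regular_use": 15, "important": 45, "want_to_use": 39},
}


def importance_gap(row):
    """Percentage points by which rated importance exceeds regular use."""
    return row["important"] - row["regular_use"]


def rank_by_gap(data):
    """Return (technology, gap) pairs, largest gap first."""
    pairs = [(name, importance_gap(row)) for name, row in data.items()]
    return sorted(pairs, key=lambda pair: pair[1], reverse=True)


for name, gap in rank_by_gap(survey):
    wanted = survey[name]["want_to_use"]
    print(f"{name}: {gap}-point gap, {wanted}% want to use but do not")
```

A large gap alongside a large share of faculty who want to use the technology but do not suggests unmet demand that support or training might address.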

We also wanted to get a better sense of faculty experiences with educational technologies, particularly how problematic various educational technologies have been for them to use. We suspected that some faculty would like to increase their use of online or classroom tools but find them too problematic to even try. Our data bear out that suspicion: fully 25 percent of responding faculty who use a laptop and projector in the classroom find the technology problematic to use; an additional 12 percent said they had either given up using the technology or had found it too much trouble to even try. This question had a high (25 percent) non-response rate, however. Table 2 shows the results.

Table 2. Faculty Experience with Educational Technologies

| Technology | Use It | Found It Problematic | Gave It Up | Problematic: Didn’t Try | Don’t Need |
| --- | --- | --- | --- | --- | --- |
| Laptop and projector chalk replacement | 71% | 25% | 4% | 8% | 11% |
| Laptop and projector beyond chalk | 60% | 26% | 4% | 10% | 14% |
| Student response systems | 15% | 5% | 4% | 16% | 39% |
| WebCT | 31% | 18% | 4% | 18% | 27% |
| Course website | 54% | 11% | 2% | 1% | 21% |
| Interactive online simulations | 22% | 9% | 3% | 16% | 34% |

Table 3 shows results for our question about online course components. Although there was a low response rate for this question (30–40 percent did not respond), we still gleaned some interesting information from it. Posting of syllabi topped the list of frequently used components, followed by posting of readings. More important for our planning, however, were the high proportions of faculty who don’t use online assignment submissions, interactive online simulations, or online quizzes—but would like to.

Table 3. Faculty Use of Online Course Components

| Online Course Components | Frequency of Use (Want to Use) | Importance to Teaching* |
| --- | --- | --- |
| Syllabus | 64% | 65% |
| Readings | 55% | 64% |
| Library e-reserves | 17% | 38% |
| Images | 40% | 47% |
| Problem sets | 39% | 40% |
| Online assignment submissions | 18% (26%) | 39% |
| Interactive simulations | 14% (24%) | 33% |
| Quizzes | 13% (23%) | 31% |

Responses to our question about what would enable faculty to use educational technologies more often also revealed potentially strategic information. As expected, faculty who already knew how to use the technology wanted more time to do the work (40 percent), while those who didn’t know how wanted more training or time to learn the skills needed (56 percent). Thirty-one percent wanted the money to pay someone else to do the work for them, and 30 percent wanted departmental or central staff to do the work. Relatively few respondents (27 percent) said that having educational technology use count toward tenure or promotion would make a difference to them.

As good practice in survey design, we included open-ended questions to give respondents a chance to tell us about uses and concerns not addressed in the rest of the survey. This was a way to acknowledge that “we don’t know what we don’t know.” Ironically, many of the open-ended responses told us things we already knew, such as that some faculty still rely heavily on chalk and overheads as pedagogical tools. When we looked more closely at these responses, we realized that our survey might be interpreted to mean we cared about only the more advanced technologies and no longer planned to support lower-tech teaching tools. Our educational technology survey had sent an unintended message to faculty: that we were technology pushers. Our latest version of the survey addressed this potential concern by stating in the introduction that the survey is a focused inquiry about particularly resource-intensive technologies and not a comprehensive inventory of all educational technologies used on campus.

Focus Groups and Working Groups

In addition to including open-ended survey questions, we planned several focus groups to encourage discussion about instructional technology and provide an opportunity for conversation to take us in directions not covered in the surveys. Often focus groups are used to gather information to guide the design of a survey. In this case, our rationale was to augment the results of the faculty survey by seeking in-depth information about particular topics through three focus groups:

- Middle-of-the-Road, to get more in-depth information from “average” instructional technology users

- Cutting-Edge, to better understand the needs and intentions of instructional technology innovators among faculty

- Research Computing, to address a topic not covered by the survey

We had only two questions for these focus groups. The first was “What are your teaching/research challenges, and how can IT help you meet them?” The second, prompted by the response to the student survey about computer ownership, was “Should the campus have a laptop requirement or recommendation?”

Unfortunately, the focus groups didn’t materialize, in part because of a protest-induced building lockdown that we were unaware of until three focus group times came and went with no participants. Although those focus groups could have added a valuable dimension to the assessment process, we were eventually able to secure adequate faculty participation through the working groups in the strategic planning process. Those working groups provided the opportunity to gather in-depth feedback directly from faculty about such topics as research computing, digital asset management, and emerging technologies. The working groups made the right people integral participants in the planning process.

Data Mining

In backward market research, once you’ve determined what information you need for decision making, you look for existing data that might answer your questions. In CU–Boulder’s project, we mined data routinely collected by ITS. Questions that we were able to answer using existing 2005 data included:

- How many students are served by WebCT, CU–Boulder’s centrally supported course management system? (over 23,000)

- How many courses use “clicker” technology? (about 60, with approximately 4,000 students total)

- What instructional technology questions come through the help desk? (WebCT password problems and urgent classroom technology glitches)

- How many “smart” classrooms are managed centrally? (just over 100)

Final Words

Conducting a campus-wide needs assessment is a lot of work. Starting with clear goals and a good plan vastly increases the probability that the assessment will go smoothly and yield genuinely useful information. Although front-loading your team’s time and energy into the initial planning phase might feel overly bureaucratic or academic, it pays off later when you can return to your overarching decision question and tacit principles as touchstones in moments of disagreement or doubt. Every institution is different—there would be little impetus for needs assessments otherwise—so it’s important to align your plan with your institution’s mission and strategic plan. You also need to be realistic about how technology is viewed in relation to the institution’s identity and aspirations. Planning a needs assessment for an institution that can’t afford to innovate is very different from planning an assessment for an institution that showcases its technology as a prime indicator of its competitiveness.

It’s also important to take into account shared assumptions and values that might not be stated explicitly in campus documents. In particular, pay close attention to beliefs about teaching and learning and attitudes about the role of technology in teaching. Do people on your campus generally believe that technology brings faculty and students closer together? Or that it creates greater distance between them? Does it represent scientific modernity or anti-intellectual shortcuts and diversions? If you’re not sure, or if your campus is split on such assumptions, it is especially important to wordsmith your survey and focus-group questions carefully. You want them to be as neutral as possible to avoid alienating campus constituents.

CU–Boulder used backward marketing principles to arrive at the overarching decision question: How do we invest our limited resources in educational technology to have the greatest impact on the teaching and learning of the greatest number of faculty members and students? In that question, you can hear our attempt to align with tacit principles that call on members of the CU–Boulder community to be fiscally responsible, committed to quality, egalitarian, and learning-centered. Most institutions share those values, but not all institutions would choose this same set over other competing values such as being innovative or expanding access and enrollments through distance learning. Backward marketing principles help anticipate the end use of the data so that you can make sure you have the information you need to make key decisions while avoiding wasted time, raised expectations, or unnecessary anxieties over decisions that can’t realistically be implemented.

Good questions are useful questions, but useful questions don’t, in themselves, yield useful data unless a needs assessment involves the right people in the right processes. If information isn’t collected from a large enough sample that represents relevant campus subgroups, findings might be misleading. Campus constituents need to recognize themselves in the results, and they especially appreciate disaggregated findings that highlight how their own subgroup differs, for legitimate reasons, from other campus subgroups. Such comparisons buoy confidence in the results while also providing a reality check for those whose views may not be as mainstream as they assume. Equally important, involving sufficient numbers of participants from key constituencies helps to increase awareness and deepen understanding of educational technology issues. Faculty and students who have been asked good questions about educational technology are more likely to keep thinking about those topics and to provide more thoughtful responses in the future. If a needs assessment process appears slapdash, on the other hand, campus constituents won’t trust the data, and neither should you.

The framework we have described brings together scholarly, technical, pragmatic, political, and idealistic considerations in three broad categories of decision making: research criteria, planning criteria, and shared governance criteria. Keeping in mind our tripartite framework of research, planning, and governance can help you make sure your bases are covered so that your findings are high quality, useful, and perceived as legitimate.

In addition, the following tips may be helpful:

- Know where you’re going (backward marketing research techniques can help with this).

- Make good use of strategic partnerships on campus.

- Employ a mix of data collection methods.

- Aspire to high research standards.

- Take all of your data and findings with a grain (or two) of salt.

- Expect the unexpected.

In writing this article, we were guided by the belief that detailed descriptions of institutional processes are more useful if combined with frameworks that help make them adaptable to differing conditions at other institutions. Likewise, abstract frameworks are more easily understood if illustrated by example. We hope we have combined our framework and examples in a way that will allow others to learn from them and to build on CU–Boulder’s experiences. We’ll know we’ve succeeded if your next campus needs assessment starts with the question “What decisions do we want to make?” and not “Can’t we just run last year’s survey again?”