Pedagogical Uses of 3D Tech

Nearly a century ago, John Dewey told us that the most effective education is experiential: Learning is achieved through personal experience and doing.1 Almost a century of subsequent educational research has shown that Dewey was correct and that active learning and experiential learning are highly effective. Some disciplines have always been able to engage in experiential education (not that they always have done so, just that they could). Disciplines such as environmental science, electrical engineering, studio art, and others that are inherently hands-on lend themselves to this type of teaching and learning. In other disciplines it is more difficult to engage in experiential education: In some it is a practical challenge to get hands-on experience (e.g., construction engineering, medicine, and law); in others the objects of study are inaccessible except by proxies and models (e.g., molecular biology, urban planning, and theoretical physics). One of the most important features of 3D technology is that it enables experiential teaching and learning in many disciplines where it would otherwise be challenging or impossible. 3D technology can make the invisible visible, the inaccessible accessible.

Modeling the Real

Perhaps the most straightforward use of 3D technology is to re-create real-world objects and spaces in virtual environments.2 There is, however, little point in simply re-creating the real in the virtual; for this type of use case to be worth the effort, it must offer something more.

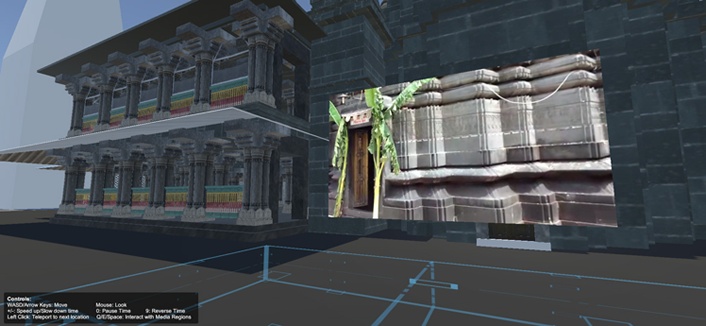

One use case of this type is re-creating historical sites. Some of the earliest work in AR and VR in educational settings was done at the Institute for Advanced Technology in the Humanities at the University of Virginia in the mid-2000s. In particular, the Digital Roman Forum and Rome Reborn projects were efforts to re-create locations in ancient Rome as accurately as possible from historical records and to enable users to "walk through" VR-like digital models.3 The Sacred Centers in India project at Hamilton College works in this vein.4 This project examines the multiple layers of the history of 55 important shrines within the Hindu pilgrimage city of Gaya through textual, archaeological, and art-historical remains. Among other work, this project has developed a VR walkthrough of the Vishnupada Temple (figure 3), complete with integrated photographs and videos. These projects re-create the real in the virtual, but the real spaces they re-create are inaccessible, either because they are distant or because they no longer exist. In either case, these projects enable historical research and archaeology that would not otherwise be possible.5

Image courtesy of Digital Humanities Initiative at Hamilton College

Another use case of this type is modeling spaces that exist in the real world and then manipulating those spaces in ways that are difficult or impossible outside of VR. Gallaudet University, a school primarily for deaf and hard-of-hearing students, is exploring this type of modeling in a distinctive context: While one generally thinks of VR as simulating visual environments, a project at Gallaudet is using VR to simulate auditory environments. The audiology department at Gallaudet is experimenting with modeling complex auditory environments such as a noisy restaurant, a traffic intersection, and a corn maze. Hard-of-hearing individuals must work with an audiologist to tune and program new hearing aids, and this often involves multiple appointments: The individual must go out into the world, experience different auditory environments, return to the audiologist, and have the hearing aids tuned iteratively. Modeling these auditory environments in VR could enable an audiologist to tune an individual's hearing aids in a single appointment. The Gallaudet project is similar in concept to a virtual walkthrough, though it might be more accurate to call it a virtual "hear-through." It re-creates the real in the virtual, but in a way that makes a task faster and more convenient.

X-Ray Vision

The Sacred Centers in India project, in addition to a virtual walkthrough, has developed functionality to enable the VR user to peel away surfaces of the Vishnupada Temple to observe the layers constructed over the course of its history. Again, while this project re-creates the real in the virtual, it re-creates the real in a way that adds value, enabling a form of historical investigation that would not be possible in the real world.

Another example of virtual spaces enabling the manipulation of objects in ways beyond what is possible in the real world comes from medicine. A human anatomy lab course at Hamilton College uses commercially available VR simulations of organs within the body (figure 4), as well as of the functions and diseases of those organs. While these applications (Organon and YOU) enable the manipulation of generic anatomy, the Immersive Tools for Learning Basic Anatomy project at Yale goes a step further. This project enables the simulation in VR of individualized anatomy by converting the output of medical imaging devices, such as MRI and CT scans, into 3D objects and environments.

Image courtesy of Benjamin Salzman, Hamilton College Library & Information Tech Services

Anatomy simulations also re-create the real in the virtual and by doing so enable teaching, learning, and experimentation with objects in ways not feasible in the real world. Medical students conduct dissections, for example, but cadavers are expensive, relatively rare, and, importantly, can only be used once; a virtual dissection enables a student to practice the same technique multiple times. Furthermore, some diseases and conditions are quite rare, and a medical student may never have the opportunity to see an organ with a specific condition; a virtual organ enables every student to see and treat even the rarest diseases.

All the Light We Cannot See

In addition to developing a VR dissection simulation, Yale's Immersive Tools for Learning Basic Anatomy project plans to develop AR overlays that can be used during real dissections. A dissection, like any surgery, is a challenging learning environment: There is usually only one lead surgeon, so not everyone gets an active hand in the dissection, and the organs being dissected are not labeled. A VR simulation gives everyone the experience of dissection, and an AR overlay makes clear what everyone is looking at, leading to better use of a rare and expensive learning opportunity.

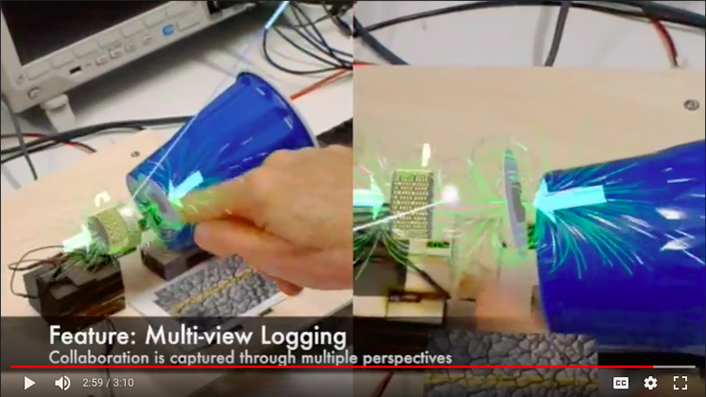

Similarly, researchers at Harvard are developing AR overlays for building electronics. When building a circuit board, for example, one generally has to use a tester, a device separate from the object being built. Harvard researchers are developing an AR electronics tester that displays sensor values and diagnostics as an overlay on the object being built. This is one example of a broader class of AR functionality that lets users see phenomena outside the visible range, such as electric and magnetic fields. Figure 5 is a screenshot from a video of a HoloLens AR project that visualizes the magnetic fields and electrical activity of audio speakers.

Image courtesy of Bertrand Schneider, Iulian Radu, and the Harvard Graduate School of Education

The Very Small and the Very Large

The range of sizes of objects with which humans come into regular contact spans only about five orders of magnitude (a grain of rice is about 1 cm in length; even very large buildings are less than a kilometer in length). The range of sizes of objects that humans can comfortably manipulate with their hands is even smaller.6 An important use of 3D technology, therefore, is to enable interaction with objects that are very small or very large.
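The "five orders of magnitude" figure follows from the two endpoints given above. Treating a grain of rice as roughly 1 cm (10^-2 m) and a very large building as just under 1 km (10^3 m), both rounded figures used here only for illustration, the span works out as follows; because the upper end is an upper bound, the true span is "about" five orders rather than exactly five.

```latex
\[
\log_{10}\!\left(\frac{10^{3}\,\mathrm{m}}{10^{-2}\,\mathrm{m}}\right)
  = \log_{10}\!\left(10^{5}\right)
  = 5
\]
```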

To work at the very small end of the scale, a materials science student at Lehigh University built a VR model to enable the visualization and manipulation of atoms, cells, and enzymes. This VR model replicates an actual lab on campus in which, among other things, research is ongoing on synthesizing modular biomaterials for tissue engineering and regenerative medicine (for example, growing an organ on a scaffold with architectures and spatially organized functionality that resemble native biological tissues, which could then be transplanted into a human). This model enables atoms, cells, and enzymes to be expanded to a size large enough to be manipulated, like a virtual version of the ball-and-stick chemistry sets in high school science classes. The user can virtually walk through a scaffold and manipulate individual atoms or enzymes to see their effect on cell growth. These scaffolds can then be 3D printed and used in the offline world.

Scale, of course, is not binary: An object small enough to benefit from being manipulated in VR can have constituent parts spanning a wide range of sizes. A project at the MIT Scheller Teacher Education Program is developing a VR walkthrough of a cell in which the objects within the cell are represented at accurate relative scale: Organelles are a fraction of the size of the cell, enzymes and proteins are a fraction of the size of organelles, and so on. This type of scale-accurate visualization is very difficult to convey in other media, such as print or even video,7 but it is quite feasible in VR, where the user can zoom in and out to better see and manipulate these subcellular objects.

At the other end of the scale, the very large—buildings such as the Vishnupada Temple and cities such as ancient Rome—are far too large for an individual to manipulate. But just as atoms and cells can be expanded to a manipulable size, so too can buildings and cities be reduced. 3D scanning and modeling are becoming increasingly common in the cultural heritage sector, particularly in museums and digital libraries, for both documentation and preservation. A notable example is the partnership between the Google Cultural Institute and CyArk to create a collection of 3D scans of archaeological sites.8

Beyond even cities, VR enables users to interact with and manipulate entire environments. At Lehigh, a project is under way to develop VR simulations that allow users to explore the Lehigh River watershed and its unique geological formations. At FIU, a project called Community was developed for a first-year experience course in the College of Communication, Architecture + The Arts. This project requires an interdisciplinary group of students to manipulate, in VR, a plot of land under 18 inches of water: a hypothetical site in the Everglades where industrial damage has occurred. The project involves multiple small-scale construction tasks for students across the many disciplines in the college and requires the manipulation of an entire environment containing many objects with various properties. Such manipulation would be far beyond what is possible in the physical world, and certainly beyond what could be accomplished within a single semester. The VR project invites students to discuss and explore personal values while they think about their role in the collective. Over the course of the semester, as they build in VR on their own lots and in the community space, they are asked to address questions about the environment, ethics, morality, the origins of government and politics, and what it means to learn and grow together within a university community.

Design

For projects such as the Sacred Centers in India, the object being studied must be as realistic as possible in order to be useful as an object of research. Other projects, however, benefit from the ability to change objects and to manipulate them in ways that may be difficult or impossible in the real world.

VR is used extensively for the visual arts. Students at FIU have designed jewelry in VR, creating shapes and using materials that might be difficult or prohibitively expensive to work with in the physical world. Similarly, arts faculty have experimented with VR sculpture, which then can be 3D printed. At FIU, one arts faculty member is experimenting with 3D scanning and printing as a "creolization" of sculpture.9 At Hamilton, students in a literature course created an AR book as a "hybrid object" by 3D scanning book art objects and using those scans as AR overlay images in a book that the class wrote and designed.

In the biomaterials lab at Lehigh, an important use of VR is to enable interaction with objects of varying sizes. Also at Lehigh, as well as at Hamilton College, theater departments are using VR to design models of stage sets and lighting schemes. At Lehigh, students in a course on digital rendering build VR models that the actors and director can walk through before the sets are built. This allows them to diagnose how the sets and lighting will work in the physical space of the stage, just as in any architectural design work.

Architectural design relies on the use of space, its properties, and the objects it contains. Not only can VR enable interaction with objects of varying sizes, it can also enable the properties of objects to be changed in ways that would be impossible in the physical world. At FIU, for example, architecture students use found objects to inform their design. Students have been known to simply go outside and pick up natural objects, scan them using a 3D scanner, and manipulate the resulting digital model as a component of a larger design project. At Yale, architects have employed VR as a tool to aid in the design of a multi-unit, all-gender bathroom, enabling the experience of the visual and acoustic qualities of the space.

Also at FIU, VR and 3D printing are being used more systematically in a course on e-commerce offered by the architecture department. In that course, students design a product and 3D print the prototype to explore how VR experiences might enhance the consumer experience of product design in e-commerce. In a service economy in which value is co-created between the provider and the customer,10 this approach to product design has the potential to dramatically change the nature of customization.

Similarly, two instructors, one at Hamilton and one at Harvard, have developed assignments for their courses that require students to design toys in VR and then 3D print them. At Hamilton College, students design and create toys in a psychology course on lifespan development. At Harvard, students interview children and then design and create a "dream toy" in a course on digital fabrication and making in education. Both projects involve designing an object in VR, either by starting from "canned" 3D models of shapes or by 3D scanning physical objects, and then manipulating those models; the finished design is then 3D printed.

Collaboration

Virtual spaces enable the manipulation of objects in ways beyond what an individual user can do in the real world. They also enable a degree of collaboration beyond real-world possibilities. Just as Google Docs, for example, has changed how collaborative writing is done, VR and AR environments enable collaborative manipulation of digital models. At FIU, 3D models are imported into game engines such as Unreal, which allows multiple users to share and manipulate the same models, just as in a multiplayer VR game.

One such multiplayer environment is the Community project at FIU, described above. Students must build a shelter for themselves, paths, and communal spaces out of blocks that have various acoustical and aesthetic properties. This project is a large-scale architectural design-and-construction task requiring the manipulation of an entire environment and many objects within it. But beyond that, it is an exercise in community building, stewardship of the environment, communications, and the ethics of design and technology.

On a smaller scale, VR can also foster interpersonal collaboration. The MIT project discussed earlier, in addition to developing a scale-accurate VR walkthrough of a cell, has another component: teamwork. In this project, something is wrong with the cell, and the VR user must fix it from within the simulation. The VR user, however, can see only what is in front of her, just as if she were really there; a second individual has a tablet and sees a large-scale overview of the cell. As in the VR game Keep Talking and Nobody Explodes, these two individuals must communicate continually and effectively in order to fix the cell.

Collaboration is leverage, amplifying what it is possible to accomplish. An important upshot of the use of 3D technology is that it encourages novel collaborations. This is, of course, common when technology is applied to new areas. The advent of digital humanities, for example, encouraged collaboration between computer scientists and historians; computer-aided design fostered collaboration between artists and programmers; the list goes on. In the context of deploying 3D technology, IT units and centers for teaching and learning are almost compelled to collaborate to provide support for faculty wishing to integrate this technology into their teaching. Beyond that, however, use of 3D technology has fostered interdisciplinary collaborations between students and faculty across academic boundaries: visual arts and computer science, medicine and media studies, bioengineering and game design. This sort of interdisciplinarity is extremely fruitful for both research and development, especially if the institution has an office for technology transfer (or an equivalent campus unit). Interdisciplinarity is also something that institutions of higher education strive to foster, because it leads to a vibrant and active campus environment.

Community Outreach

The Miami Beach Urban Studios (MBUS) at FIU frequently hosts K–12 classes from the local community, which puts it in the company of other local anchor institutions, such as museums, that intersect with K–12 education in a variety of ways. While this report is primarily about 3D technology in higher education, it is not possible to talk about higher education in isolation: K–12 education is of course the pipeline to higher education. This sort of community integration is therefore valuable for fostering interest in STEAM and higher education generally among K–12 students.

Taking a class of K–12 students on a field trip, however, is logistically complicated: Permission forms must be signed, a bus must be rented, chaperones must be recruited, etc. It is in some ways easier to reverse the visiting arrangement: Instead of a class coming to the anchor institution, the anchor institution can come to the class.

Researchers at Syracuse University are pursuing this avenue. Syracuse was the only participant in the Campus of the Future project to have an HP Z VR Backpack PC. This PC is similar to the HP Omen Desktop (see appendix) but is comparatively flat and designed to fit into a backpack harness, so that it can be worn while the user moves freely. Researchers at Syracuse have conducted, and are planning more, "pop-up" events, where they take VR Backpack rigs to local schools, community events, or even just onto the campus quad and allow people to experience VR environments. These events serve the dual purpose of gathering feedback on ongoing research and development from new users and generating interest within the community.

Addressing the Project Evaluation Questions: Learning Goals Served by 3D Technologies

The ability to support and promote shared experiences and collaboration is one of the most powerful affordances of 3D technology. And this leads to arguably the most significant outcome of the use of 3D technologies in higher education: their ability to enhance active and experiential learning.

To dramatically oversimplify, experiential learning is learning through doing and then thinking about what one has done. A critical component of experiential learning is this "metacognition," whereby learners reflect on their current understanding and further information needs.11 This, of course, generally requires an instructor to monitor the learner's reflection and self-assessment, which is not necessarily enabled by 3D technology. What is enabled by 3D technology is the experiential part of experiential learning. By dramatically expanding the range of tasks and activities with which a learner can gain hands-on experience, 3D technology can enable active and experiential learning where it may not have previously been possible. And it is precisely this ability to provide such experiences that makes 3D technologies useful for education. 3D printing can literally provide hands-on experience of things that were previously inaccessible to hands, such as molecules. AR can enable hands-on experience of things that are not physical, such as electromagnetism. And when wearing a VR headset (provided one's nonvisual sensory inputs do not conflict with the simulation), one tends to accept the simulation as a genuine experience, perceived as actually being there.

Here, then, is an answer to the first of the evaluation questions motivating the Campus of the Future project: Experiential approaches to teaching and learning lend themselves to the use of 3D technologies.

The answer to the second of the evaluation questions motivating this project is more complex. What are the most effective 3D technologies for various learning goals? It almost goes without saying that it depends on the learning goal. There are of course as many learning goals as there are disciplines, as many as there are instructors, as many as there are students. And it is probably also true that the Campus of the Future project did not identify all possible uses of 3D technologies. Nevertheless, we can at least start to answer this question. Table 1 identifies some learning goals from projects at participating institutions, some 3D technologies that are effective for meeting those learning goals, and the mechanisms by which those technologies can help meet those learning goals.

Table 1. Learning goals that 3D technologies are effective in helping to meet

| Learning Goal | 3D Technology | Mechanism | |||

|---|---|---|---|---|---|

| VR | AR | 3D Scanning | 3D Printing | ||

| Develop ethical awareness | X | Simulations designed to require empathy or communal approaches to solve | |||

| Develop analytical skills | X | X | Simulations designed to structure the achievement of learning goals | ||

| Gain practice | X | X | Shared simulations | ||

| Develop strategies for collaboration | X | X | Shared simulations | ||

| Gain self-confidence in practical tasks | X | Iteration of simulated experiences | |||

| Develop scientific literacy | X | X | Interaction with objects too large or too small to interact with in the physical world | ||

| Develop artistic literacy | X | X | X | X | Interaction with materials difficult or impossible to manipulate in the physical world, and the ability to iterate designs |

| Develop spatial and 3D visualization skills | X | X | Iteration of design work | ||

| Increase student ownership of their own learning | X | X | X | X | Learning new skills to use the technology; conceptualizing one's own uses for the technology |

| Develop teaching and mentoring skills | X | X | X | Collaboration with peers on shared experiences and/or simulations | |

| Develop oral communication skills | X | X | X | X | Collaboration with others on shared experiences and/or simulations |

| Develop systems-thinking skills | X | X | X | X | Simulations designed to require mental modeling and abstraction |

Again, there is a nearly infinite number of possible learning goals, of which these are only a few. Like any technology, 3D technology has many uses, not all of which may have been discovered yet. Still, the Campus of the Future project identified a diverse set of important learning goals, across a wide range of disciplines, for which 3D technologies are effective.

Notes

1. John Dewey, Experience and Education (New York: Macmillan, 1938).
2. Bryan Sinclair and Glenn Gunhouse, "The Promise of Virtual Reality in Higher Education," EDUCAUSE Review (March 7, 2016).
3. Andrew Curry, "Rome Reborn," Smithsonian Magazine (June 30, 2007); Bernard Frischer, "Cultural and Digital Memory: Case Studies from the Virtual World Heritage Laboratory," in Memoria Romana: Memory in Rome and Rome in Memory, ed. Karl Galinsky (Ann Arbor: University of Michigan Press, 2014).
4. The Sacred Centers in India project is directed by Abhishek Amar and produced by the Digital Humanities Initiative at Hamilton College with funding from the Andrew W. Mellon Foundation.
5. "London Charter for the Computer-Based Visualisation of Cultural Heritage," Draft 2.1 (February 7, 2009); and P. Reilly, "Towards a Virtual Archaeology," in Computer Applications and Quantitative Methods in Archaeology 1990 (CAA90), eds. Sebastian Rahtz and Kris Lockyear, BAR International Series 565 (Oxford: Tempus Reparatum, 1991): 132–39.
6. I. M. Bullock, T. Feix, and A. M. Dollar, "Human Precision Manipulation Workspace: Effects of Object Size and Number of Fingers Used," in Proc. 37th Annual International Conference of the IEEE Engineering in Medicine and Biology Society (August 2015).
7. The 1977 film Powers of Ten is famous for its visualization of scale.
8. Phoebe Braithwaite, "Google's 3D Models Are Saving the World's Most At-Risk Heritage Sites," Wired (April 16, 2018).
9. A creole is an emergent culture or language that arises from the mixing of populations, often in the wake of colonization. Creolization is therefore the process by which these cultures and languages emerge. In the current context, the "creolization of sculpture" means the emergence of new forms, techniques, etc., from the mixing of the traditional and the new.
10. S. E. Sampson and C. M. Froehle, "Foundations and Implications of a Proposed Unified Services Theory," Production and Operations Management 15, no. 2 (2006): 329–43.
11. National Research Council, How People Learn: Brain, Mind, Experience, and School: Expanded Edition (Washington, DC: National Academies Press, 2000).